Bots are now part of many online activities, from data scraping and automated testing to social media management. Running them effectively and ethically, however, means dealing with IP bans, rate limits, and privacy concerns.

This is where proxies come in. This article explains why proxies matter in bot operations, how they extend what a bot can do, and how to use them in line with legal and ethical standards.

The Role of Proxies in Bot Operations

When deploying bots for tasks like web scraping, data collection, or automated interactions, one of the primary challenges is avoiding detection and blocking by websites. Proxies from Infatica, for instance, play a pivotal role in this context. These proxies act as intermediaries between the bot and the target website, masking the bot’s original IP address and thereby reducing the risk of detection and IP bans.

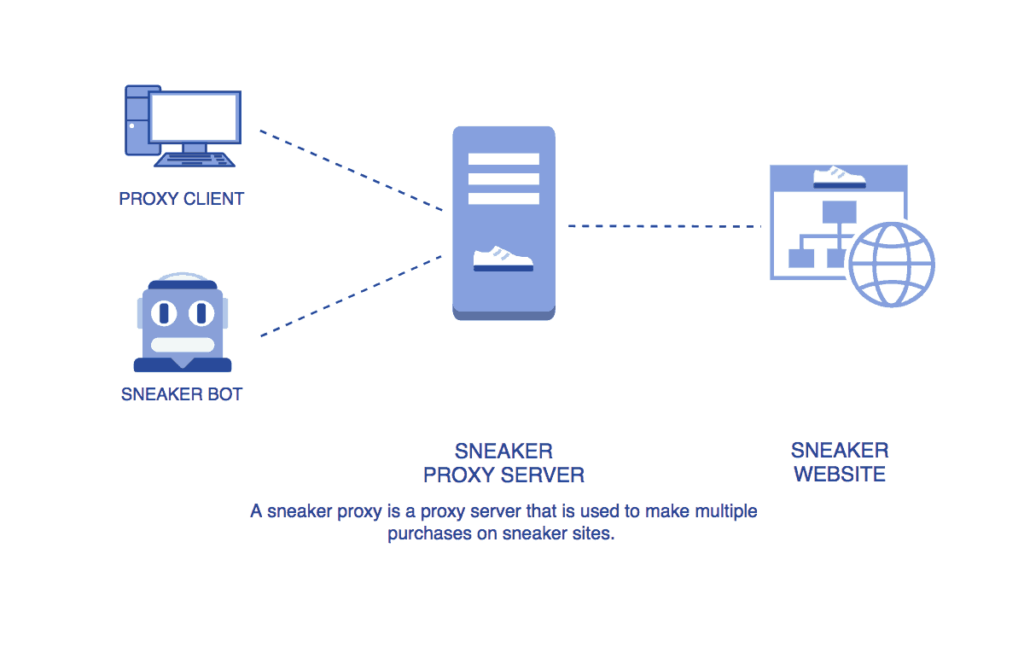

By using a pool of diverse IP addresses provided by Infatica, bots can operate more discreetly, accessing data or performing tasks without triggering security protocols that often block repetitive requests from a single IP address.
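As a rough illustration of the intermediary role described above, the following sketch routes a bot's requests through a single proxy using only Python's standard library. The helper name `build_proxy_opener` and the proxy address are placeholders chosen for this example; they are not taken from any provider's documentation.

```python
import urllib.request


def build_proxy_opener(proxy_url: str) -> urllib.request.OpenerDirector:
    """Return an opener that sends HTTP and HTTPS requests through proxy_url.

    proxy_url is a placeholder such as "http://user:pass@proxy.example.com:8080";
    substitute the endpoint and credentials issued by your own provider.
    """
    handler = urllib.request.ProxyHandler({"http": proxy_url, "https": proxy_url})
    return urllib.request.build_opener(handler)
```

A bot would then call something like `build_proxy_opener(proxy_url).open("https://example.com/", timeout=10)`, and the target site would see the proxy's IP address rather than the bot's own.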

Benefits of Using Proxies in Bot Operations

Proxies offer benefits that go beyond simple IP masking. These advantages help bots perform their tasks efficiently, ethically, and securely.

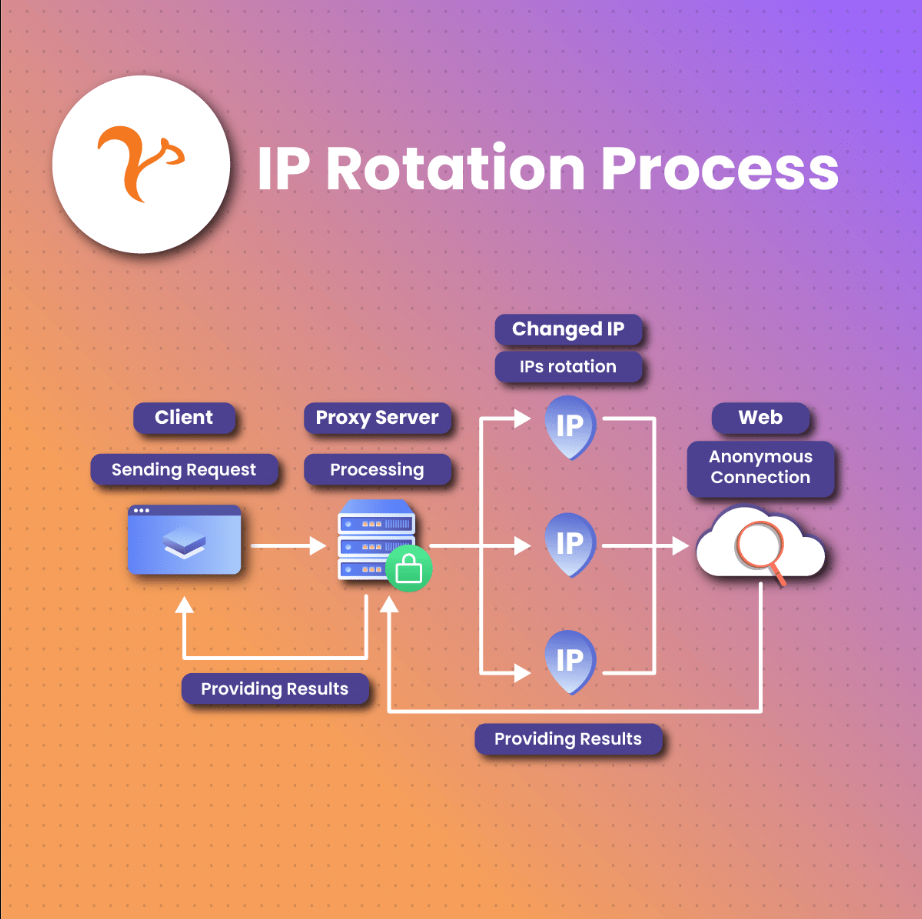

IP Rotation for Avoiding Bans

- Enhanced Operational Continuity: By rotating IP addresses, proxies ensure that bots can continue their operations uninterrupted, even when some IPs get banned or blocked. This continuity is vital for tasks that require consistent uptime, such as continuous data scraping or monitoring.

- Diverse IP Pool: Proxies often provide access to a vast pool of IP addresses from various regions, which helps in simulating access from different users across the globe. This diversity is crucial in scenarios where data or access points vary based on user location.
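The rotation idea above can be sketched as a small round-robin pool that skips addresses known to be banned. This is a minimal sketch under simple assumptions: the class name `ProxyPool` and its methods are hypothetical, and many commercial providers handle rotation on their side instead.

```python
class ProxyPool:
    """Round-robin pool of proxy URLs that skips entries marked as banned."""

    def __init__(self, proxies):
        self._proxies = list(proxies)
        if not self._proxies:
            raise ValueError("at least one proxy is required")
        self._banned = set()
        self._index = 0

    def next_proxy(self) -> str:
        """Return the next usable proxy, cycling through the pool in order."""
        for _ in range(len(self._proxies)):
            proxy = self._proxies[self._index]
            self._index = (self._index + 1) % len(self._proxies)
            if proxy not in self._banned:
                return proxy
        raise RuntimeError("all proxies are marked as banned")

    def mark_banned(self, proxy: str) -> None:
        """Exclude a proxy from rotation, e.g. after the target blocks it."""
        self._banned.add(proxy)
```

The bot asks the pool for a proxy before each request and calls `mark_banned` when a response indicates that the address has been blocked, so the remaining addresses keep the job running.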

Geo-targeting Capabilities

- Access to Localized Content: Proxies enable bots to access and interact with content that is tailored to specific geographic locations. This is particularly useful for businesses that need to gather market-specific data or test their services in different regions.

- Overcoming Censorship and Blocks: In regions where certain services or information are censored or blocked, geo-targeted proxies allow bots to bypass these restrictions, ensuring access to a broader range of data and content.
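One simple way to express geo-targeting in a bot is a lookup from country code to proxy endpoints. The sketch below assumes a hand-maintained table; the name `GEO_PROXIES`, the function `proxy_for_country`, and the endpoint addresses are all hypothetical, and real providers each have their own way of selecting a location.

```python
import random

# Hypothetical endpoints grouped by ISO country code; replace with real ones.
GEO_PROXIES = {
    "us": ["http://us1.proxy.example.com:8080", "http://us2.proxy.example.com:8080"],
    "de": ["http://de1.proxy.example.com:8080"],
}


def proxy_for_country(country_code: str, proxies_by_country=GEO_PROXIES) -> str:
    """Pick a random proxy located in the requested country."""
    key = country_code.strip().lower()
    candidates = proxies_by_country.get(key)
    if not candidates:
        raise LookupError(f"no proxy configured for country {key!r}")
    return random.choice(candidates)
```

A bot that needs to see the German version of a page would call `proxy_for_country("de")` and send its request through the returned endpoint.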

Improved Anonymity and Security

- Protection Against Tracing: By masking the originating IP address, proxies make it harder to trace requests back to the bot operator, which helps maintain privacy and security.

- Secure Data Handling: When dealing with sensitive data, a proxy adds a layer between the bot and the target server. A proxy does not encrypt traffic by itself, and the proxy operator can read any unencrypted requests that pass through it, so sensitive transfers should still use HTTPS and a provider you trust.

Load Balancing to Prevent Server Overload

- Efficient Resource Utilization: Proxies help distribute the traffic generated by bots evenly across multiple exit servers, so that no single proxy bears excessive load. This distribution is key to maintaining stable performance and avoiding service disruptions.

- Reduced Risk of Service Denial: Spreading requests across proxies, combined with sensible request rates, reduces the risk of unintentional Denial-of-Service (DoS) situations, which can occur when a server receives more requests than it can handle. Distribution alone does not lower the total load on the target site, so it is not a substitute for throttling.

Enhanced Performance and Speed

- Faster Response Times: Some proxies are optimized for speed, ensuring that the bots can perform tasks like data scraping or automated testing more quickly and efficiently.

- Bandwidth Savings: Proxies that support caching can store responses, reducing bandwidth usage and speeding up the retrieval of frequently accessed resources. This is particularly beneficial for bots that repeatedly access the same data points, although encrypted HTTPS responses generally cannot be cached by an intermediary.

Considerations and Best Practices

The advantages of proxies in bot operations are significant, but using them effectively and ethically requires care. The key considerations and best practices are outlined below.

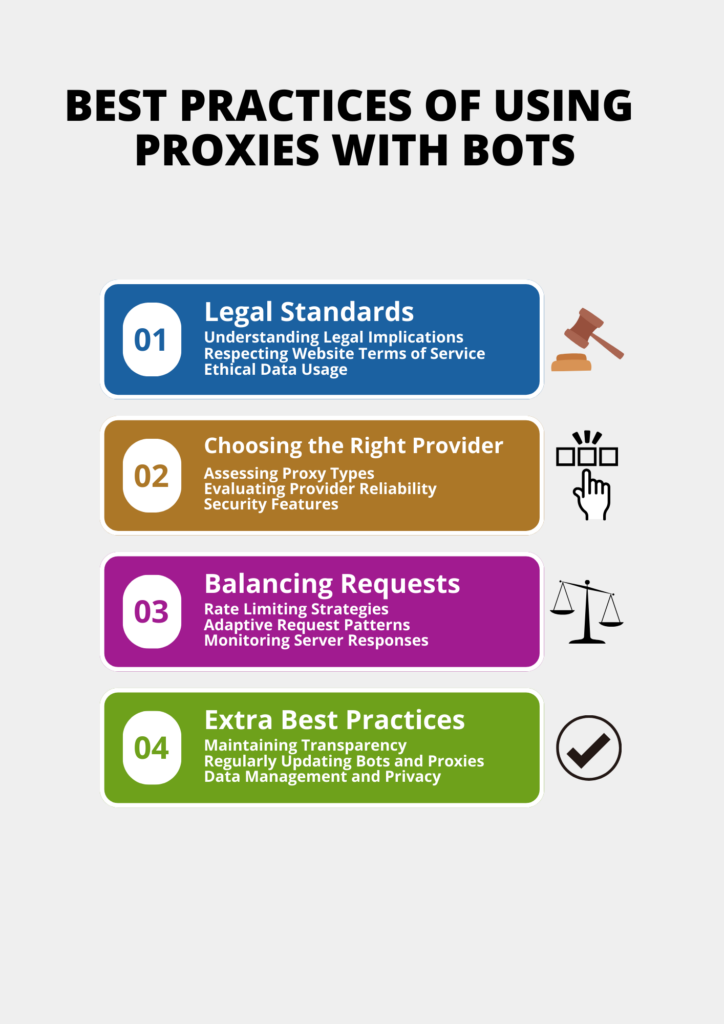

Complying with Legal Standards

- Understanding Legal Implications: It’s crucial to be aware of the legal landscape surrounding bot activities, especially in data scraping. Different countries and regions have varying laws regarding data privacy and digital rights, which must be respected.

- Respecting Website Terms of Service: Many websites have specific terms of service that prohibit or restrict automated data collection. It’s important to review and adhere to these terms to avoid legal complications.

- Ethical Data Usage: Beyond legal compliance, consider the ethical implications of data scraping. Ensure that the data collected is used responsibly and does not infringe on individual privacy rights.

Choosing the Right Proxy Provider

- Assessing Proxy Types: Different types of proxies (such as residential, data center, or mobile proxies) offer various features and levels of reliability. Choose the type that best suits your specific bot operation needs.

- Evaluating Provider Reliability: Research potential proxy providers thoroughly. Look for reviews, uptime statistics, and support options to ensure you choose a provider that is reliable and responsive.

- Security Features: Ensure that the proxy provider offers robust security features to protect your data and operations from potential cyber threats.

Balancing Requests

- Rate Limiting Strategies: Implement rate limiting in your bot’s code to control the frequency of requests. This helps in mimicking human browsing patterns and reduces the risk of being flagged as a bot.

- Adaptive Request Patterns: Consider varying the timing and volume of requests based on the target server’s load and response times. This adaptive approach can minimize the risk of overloading servers and appearing suspicious.

- Monitoring Server Responses: Regularly monitor server responses for signs of throttling or blocking. If such signs are detected, adjust the request rate accordingly to maintain a good relationship with the target server.
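The three points above can be combined in one small helper: wait between requests, slow down when the server signals throttling, and speed up again once responses are healthy. This is a sketch under stated assumptions rather than a definitive implementation. The class `AdaptiveThrottle` and its parameters are hypothetical, and treating HTTP 429 (Too Many Requests) and 503 (Service Unavailable) as throttling signals is a common convention, not a rule every site follows.

```python
import random
import time


class AdaptiveThrottle:
    """Delay between requests that backs off when the server pushes back."""

    def __init__(self, base_delay: float = 1.0, max_delay: float = 60.0,
                 jitter: float = 0.25):
        self.base_delay = base_delay    # minimum seconds between requests
        self.max_delay = max_delay      # upper bound after repeated backoff
        self.jitter = jitter            # random extra wait, as a fraction of the delay
        self.current_delay = base_delay

    def record_response(self, status_code: int) -> None:
        """Adjust the delay based on the status code of the last response."""
        if status_code in (429, 503):
            # Throttling signal: double the delay, up to max_delay.
            self.current_delay = min(self.current_delay * 2, self.max_delay)
        elif 200 <= status_code < 300:
            # Healthy response: halve the delay, down to base_delay.
            self.current_delay = max(self.current_delay / 2, self.base_delay)

    def next_wait(self) -> float:
        """Return the number of seconds to wait before the next request."""
        return self.current_delay + random.uniform(0, self.current_delay * self.jitter)

    def wait(self) -> None:
        """Sleep for the current delay plus jitter."""
        time.sleep(self.next_wait())
```

In a request loop, the bot would call `wait()` before each request and `record_response(status)` after it. The jitter keeps the timing from being perfectly regular, and the backoff gives an overloaded server room to recover.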

Additional Best Practices

- Maintaining Transparency: Where possible, be transparent about your use of bots and proxies. This can include declaring bot activities in user-agent strings or providing contact information for website administrators.

- Regularly Updating Bots and Proxies: Keep your bots and proxies updated to ensure they comply with the latest standards and practices, and to benefit from the latest security and performance enhancements.

- Data Management and Privacy: Be diligent in how you store, handle, and dispose of data collected through bot activities. Ensure robust data management practices to maintain confidentiality and integrity.
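For the transparency point above, one common convention is to name the bot in the `User-Agent` header and include a way to reach its operator. The sketch below follows that convention; the function name `build_bot_headers` and all example values are placeholders, and the exact format is a matter of choice rather than a standard.

```python
def build_bot_headers(bot_name: str, version: str, info_url: str,
                      contact_email: str) -> dict:
    """Build request headers that identify the bot and its operator."""
    user_agent = f"{bot_name}/{version} (+{info_url}; {contact_email})"
    return {
        "User-Agent": user_agent,
        # "From" is a standard HTTP header for the operator's email address.
        "From": contact_email,
    }
```

For example, `build_bot_headers("ExampleBot", "1.0", "https://example.com/bot", "ops@example.com")` yields a `User-Agent` that a site administrator can look up and respond to.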

Conclusion

Proxies are a vital part of the toolkit for modern bot operations. They give bots the anonymity and flexibility they need to perform efficiently and responsibly. By using proxies, particularly those from reputable providers like Infatica, bot operators can extend their capabilities while adhering to ethical and legal standards.

As the digital landscape continues to evolve, the intelligent use of proxies will remain a key factor in the successful deployment of bots across various online domains.